Recognizing the Work (Excerpt from AI Wisdom Volume 2: Meta-Principles of Interaction)

May 15! That’s when AI Wisdom Volume 2: Meta-Principles of Interaction is released! You can pre-order the ebook now, and the paperback will also be available at Amazon. Other bookstores will get a print version they can sell a couple of weeks afterward.

Excerpt: “Recognizing the Work”

This excerpt is from Chapter 6, “Problem Solving,” in AI Wisdom Volume 2: Meta-Principles of Interaction. The chapter opens with three high-profile failures at Google, Netflix, and Zillow to illustrate how even sophisticated organizations stumble when they misframe problems. It then argues that AI is pushing management-level cognitive skills—problem framing, task allocation, workflow design—down to entry-level workers. “Recognizing the Work” is where the chapter pivots from that argument to its practical foundation.

We don’t even think about it. Most of the time we don’t have a problem framing. We don’t know what our purpose is for that setting, for that moment. Maybe we’re just talking to someone and don’t have an agenda.

AI doesn’t afford that luxury. Interacting with it requires purpose. Delegating work to it requires intention. Any GenAI interaction starts with “what am I trying to do?” As the Google, Netflix, and Zillow examples show, problem characterizations need precision.

What part of the work should go to AI is where much of the rest of this chapter lives. But that question is hard to answer with any specificity unless the types of challenges are distinguished first. If you’re trying to express something, you might be fine letting AI handle editorial work, or even some of the text, but not the argument itself. If you’re trying to make a choice, the AI might be most valuable filling in situational awareness you’re missing. One is delegating editing; the other is delegating retrieval. The nature of the task determines what makes sense to hand off.

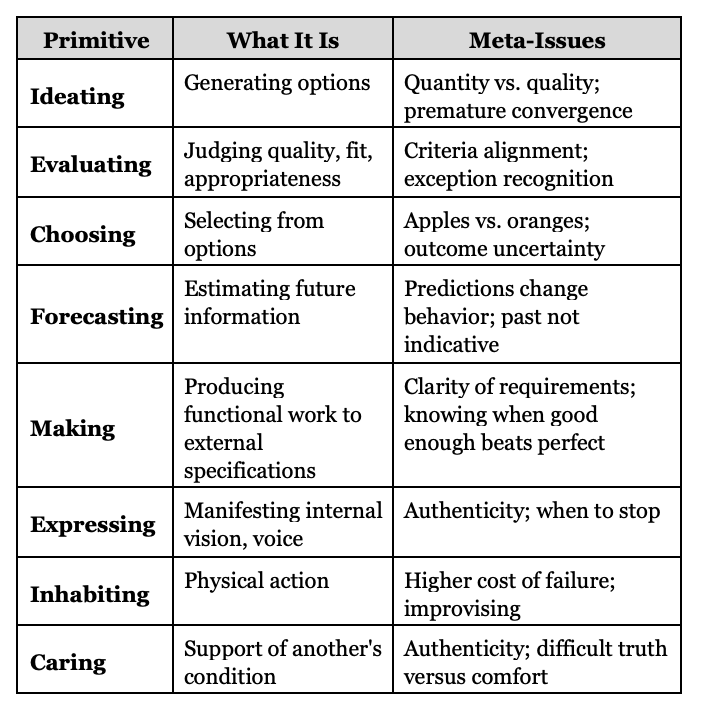

Work can be described in countless ways, but most of the breakdowns don’t map cleanly onto AI allocation. What’s needed is vocabulary that tracks allocation differences. Table 1 offers a set of primitive task types that are inherent to intelligent function, frequently required, and not easily decomposed into something more elemental, though they do have subtypes. Each also carries characteristic meta-tensions.

When you work alone, you don’t consciously separate these components. A skilled teacher planning a unit moves fluidly between generating ideas, evaluating them, choosing directions, predicting student responses, and making materials. The work gels into seamless professional judgment.

Directing work is different. This book is largely about the transition from doing to directing, and when directing you can’t wing it. Especially when your worker is the savant intern who’s smart enough to do meaningful work...but naïvely. You need to know what kind of task you’re assigning, how to best support the AI for that task, and what might go wrong.

Surely there are task categories this list misses, or reasonable debates about the ones it includes. Consider it version 1.0. What matters is having a taxonomy. Vague claims that AI “helps” or “hurts” learning say little when performance on each task type, and probably subtype, needs to be assessed separately.

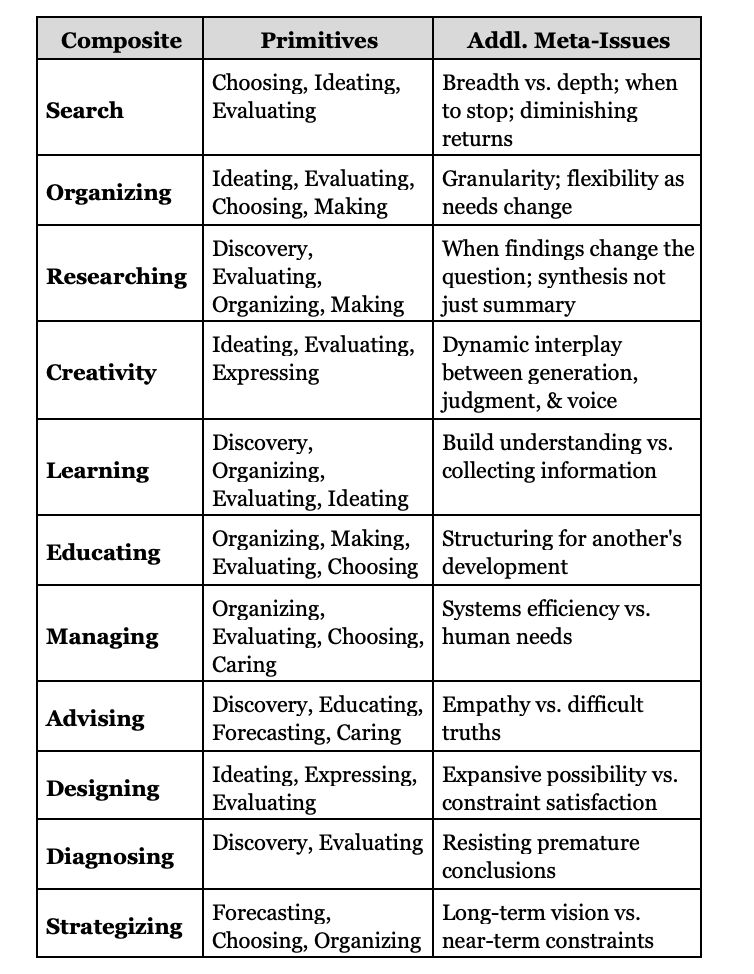

Many activities are composites. They integrate multiple primitives into something larger. Table 2 maps a partial set.

When primitives combine in tight iteration or creative tension, as with the composite task examples, new meta-issues can emerge that don’t belong to any single component. Consider search. It decomposes into ideating strategies, iteratively choosing where to search, and evaluating what’s relevant. But as an integrated activity, search generates tensions that arise because of the combination, such as breadth vs. depth. Similarly, researching isn’t a sequence of discovering, then evaluating, then organizing, then making. It’s the integrated activity of building understanding that creates emergent challenges (e.g., when your findings change your question) not visible at the component level.

As skill develops, the boundaries between the elements blur and they can fuse into simultaneous consideration. That’s OK and will happen naturally. For young learners, though, a decomposition process is an appropriate step toward fluency. Besides, some of what is fused in our heads needs to be teased apart for potential AI tasking. I’ve added the task type vocabulary to support learning to delegate, not to describe how expert work functions. Decomposition is useful in directing work, not necessarily in how you do it.

Good allocation decisions also require understanding the dimensions that determine when human versus AI intelligence contributes most effectively. That’s next.

©2026 Dasey Consulting LLC